
Backtesting Description


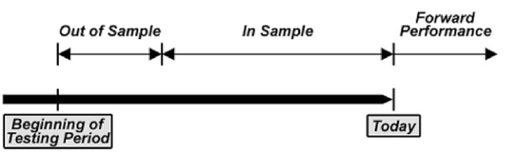

How Do You Develop a Good Indicator, Model or Strategy? Backtest to Establish Its Effectiveness.

Backtesting is the process of testing an investment strategy against historical data to assess its viability before risking any actual capital. An investment in the strategy is simulated over an appropriate period of time, and the results are analyzed for profitability and risk. The backtesting process is the same for indicators, models and strategies, and we use these terms interchangeably in the discussion below.

The primary goal of backtesting is to simulate real investment experience using historical data. For instance, to develop an indicator for the S&P 500 Index, we would use the index's historical pricing data. First, we would develop a concept: say, sell when the S&P 500 Index crosses below its x-day moving average and buy when it crosses above. Then, using a portion of the historical data (referred to as in-sample data), we would optimize to find the value of x (the number of days in the moving average) that yields the best results. Let's say the optimized value turns out to be 200 days. Next, we would compare the optimized in-sample results with a backtest of the same strategy and settings (sell if the S&P 500 crosses below its 200-day moving average, buy if it crosses above) over a different time period (referred to as out-of-sample data).

Because the out-of-sample data were not considered in the optimization, this step simulates investing in real time. If the profitability and risk characteristics are similar for both samples, the indicator, model or strategy can be deemed valid and robust and is ready to be implemented in real-time markets. If the strategy fails the out-of-sample comparison, it should be abandoned. Going back to the in-sample data to make further modifications creates a high risk of "curve fitting" to the historical data, which invalidates the whole backtesting process and makes it statistically meaningless.
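The moving-average example above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the method used for the indicators discussed here: the price series is a synthetic random walk rather than S&P 500 data, and the function names (`sma_signal`, `backtest`, `optimize`), the candidate window lengths, the 2,000-day split and the 252-day trading year are all hypothetical choices made for the sketch.

```python
import math
import random

TRADING_DAYS = 252  # assumption: trading days per year, used to annualize


def sma_signal(prices, window):
    """1 = hold the index, 0 = hold cash, decided at each day's close."""
    signal, running = [], 0.0
    for i, price in enumerate(prices):
        running += price
        if i >= window:
            running -= prices[i - window]  # drop the day leaving the window
        have_history = i + 1 >= window
        signal.append(1 if have_history and price > running / window else 0)
    return signal


def backtest(prices, window, start, end):
    """Simulate the rule over prices[start:end]; return profit and risk stats."""
    signal = sma_signal(prices, window)
    equity = peak = 1.0
    max_drawdown = 0.0
    for i in range(start + 1, end):
        if signal[i - 1]:  # yesterday's signal sets today's position
            equity *= prices[i] / prices[i - 1]
        peak = max(peak, equity)
        max_drawdown = max(max_drawdown, 1.0 - equity / peak)
    years = (end - start - 1) / TRADING_DAYS
    return {
        "total_return": equity - 1.0,
        "annual_return": equity ** (1.0 / years) - 1.0,
        "max_drawdown": max_drawdown,
    }


def optimize(prices, candidates, start, end):
    """Pick the window length with the best annual return on the given span."""
    return max(
        candidates,
        key=lambda w: backtest(prices, w, start, end)["annual_return"],
    )


def main():
    # Synthetic placeholder prices (a random walk), NOT real index data.
    random.seed(0)
    prices = [100.0]
    for _ in range(3000):
        prices.append(prices[-1] * math.exp(random.gauss(0.0003, 0.01)))

    split = 2000  # first 2,000 days are in-sample, the rest out-of-sample
    best = optimize(prices, [20, 50, 100, 150, 200], 0, split)
    in_sample = backtest(prices, best, 0, split)
    out_of_sample = backtest(prices, best, split, len(prices))

    print("optimized window:", best)
    for label, stats in (("in-sample", in_sample), ("out-of-sample", out_of_sample)):
        print(label, {k: round(v, 4) for k, v in stats.items()})


if __name__ == "__main__":
    main()
```

The window length is chosen from the in-sample span only, and the out-of-sample span is then run once with that setting. Comparing annualized return and maximum drawdown between the two spans is one way to judge whether the profitability and risk characteristics are similar. Re-running `optimize` after looking at the out-of-sample figures would be the curve fitting the text warns against.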


We tested and refined the indicators (as well as our risk model and our strategies) using a portion of our historical data (in-sample testing). We then evaluated the indicators on the rest of our historical data to simulate how they would perform in real life (out-of-sample testing). Normally, out-of-sample performance is worse than in-sample performance. This stands to reason, because inputs can be adjusted during in-sample testing to improve the results. Out-of-sample testing rests on the statistically sound principle that data not used in development provide an unbiased estimate of the indicator’s future performance. If the out-of-sample results are almost as good as the in-sample results, the indicator has merit. Valid, robust indicators (models, strategies) have common characteristics:
